Theoretical Background

Xinyu Dai and Susanne M. Schennach

2026-02-14

This article provides the mathematical foundation for the bias-bound approach implemented in rbbnp, based on Schennach (2020).

The Bias-Variance Tradeoff

The Challenge

In nonparametric estimation, we face a fundamental tradeoff:

- Large bandwidth: Low variance but high bias

- Small bandwidth: Low bias but high variance

Traditional approaches either:

- Undersmooth: Use smaller bandwidths to reduce bias, but this inflates variance and produces inefficient confidence intervals

- Ignore bias: Use optimal MSE bandwidths but produce invalid confidence intervals
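The tradeoff can be seen in a small Monte Carlo experiment. The sketch below uses only base R with a hand-written Gaussian-kernel estimator (not the rbbnp estimator); the sample size, bandwidths, and evaluation point are arbitrary choices made for illustration.

```r
# Monte Carlo bias and variance of a Gaussian-kernel density estimate at x0,
# for standard normal data. All settings are illustrative assumptions.
set.seed(1)
kde_at <- function(x0, x, h) mean(dnorm((x0 - x) / h)) / h

n <- 200
x0 <- 0
reps <- 500
bandwidths <- c(small = 0.1, large = 0.8)

# One column per bandwidth, rows "bias" and "variance"
sim <- sapply(bandwidths, function(h) {
  est <- replicate(reps, kde_at(x0, rnorm(n), h))
  c(bias = mean(est) - dnorm(x0), variance = var(est))
})
print(round(sim, 5))
```

With these settings the large bandwidth should show the larger absolute bias and the small bandwidth the larger variance.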

The Solution

The bias-bound approach takes a different path: instead of eliminating or ignoring bias, we bound it. This allows us to:

- Use optimal (MSE-minimizing) bandwidths

- Construct valid confidence intervals that explicitly account for potential bias

- Achieve better coverage without sacrificing efficiency

Mathematical Framework

Fourier Representation

Key Insight

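The bias-bound approach exploits the Fourier representation of the bias. For a kernel density estimator $\hat f_h$ with bandwidth $h$:

$$
E[\hat f_h(x)] - f(x) = \frac{1}{2\pi} \int \left( \kappa(h\xi) - 1 \right) \phi(\xi) \, e^{-i\xi x} \, d\xi
$$

where $\phi$ and $\kappa$ are the Fourier transforms of the density $f$ and the kernel $K$.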

Smoothness Detection

The Fourier transform of a smooth function decays polynomially:

$$
|\phi(\xi)| \le A \, |\xi|^{-r}
$$

where:

- $A$ is an amplitude constant

- $r$ measures the smoothness (larger = smoother)

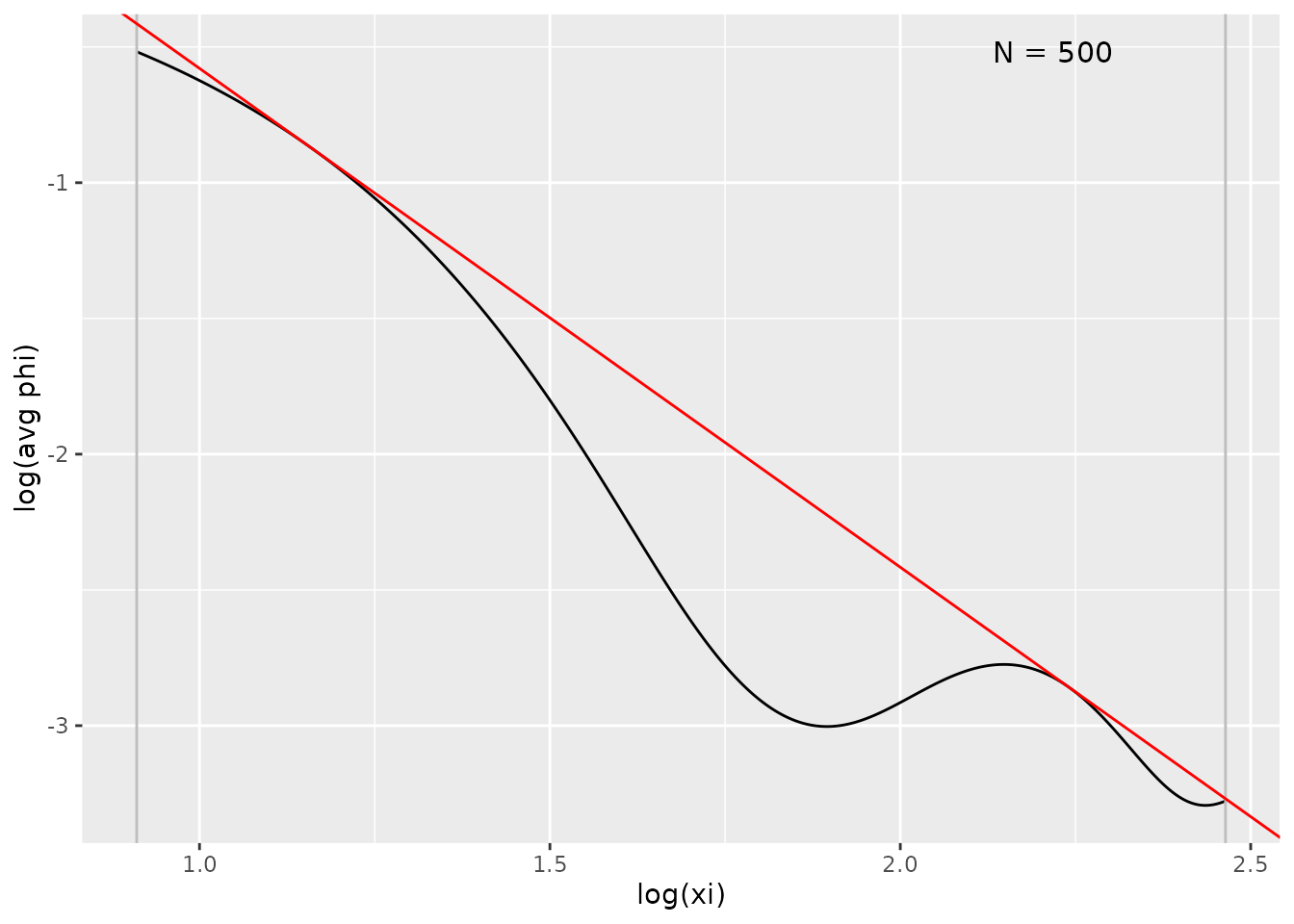

The package automatically detects $A$ and $r$ from the data by fitting an envelope to the empirical Fourier transform.

```r
library(rbbnp)

# Generate sample data
X <- gen_sample_data(size = 500, dgp = "2_fold_uniform", seed = 42)

# Estimate density
fit <- biasBound_density(X, h = 0.08, kernel.fun = "Schennach2004")

# View detected smoothness parameters
coef(fit)
#>        A        r        h
#> 3.520305 1.837500 0.080000

# Visualize Fourier transform fit
plot(fit, type = "ft")
```

The plot shows:

- Black curve: Empirical Fourier transform magnitude

- Red line: Fitted envelope

- Grey lines: Frequency range used for fitting
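To make the idea concrete, here is a rough base R sketch of how a power-law envelope $A|\xi|^{-r}$ might be fitted to an empirical Fourier transform. It is not the algorithm used by rbbnp: the Laplace test data, the frequency range, and the rule of fitting by least squares and then shifting the line upward are all assumptions chosen for simplicity.

```r
# Fit a power-law envelope A * xi^(-r) to the empirical characteristic function.
set.seed(1)
X_demo <- rexp(20000) - rexp(20000)   # Laplace draws; true |phi(xi)| = 1 / (1 + xi^2)

xi  <- seq(1, 5, length.out = 50)     # assumed frequency range for the fit
mag <- sapply(xi, function(s) Mod(mean(exp(1i * s * X_demo))))

# Slope from a log-log least-squares fit: log|phi| ~ log(A) - r * log(xi)
ls_fit <- lm(log(mag) ~ log(xi))
r_hat  <- -unname(coef(ls_fit)[2])

# Raise the intercept so the fitted line lies on or above every point (an envelope)
A_hat <- exp(max(log(mag) + r_hat * log(xi)))

c(A = A_hat, r = r_hat)
```

Shifting the intercept is one simple way to turn a best-fit line into an upper envelope; other choices of frequency window or fitting rule would give different values of `A_hat` and `r_hat`.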

Constructing Bias Bounds
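A sketch of how such a bound can arise, in notation that is assumed here rather than taken from the package: write $\phi$ for the Fourier transform of the density $f$, $\kappa$ for that of a symmetric kernel, and suppose $|\phi(\xi)| \le A|\xi|^{-r}$ with $r > 1$ at every frequency where $\kappa(h\xi) \neq 1$. Taking absolute values inside the Fourier representation of the bias gives a bound that is uniform in $x$:

$$
\left| E[\hat f_h(x)] - f(x) \right|
= \left| \frac{1}{2\pi} \int \left( \kappa(h\xi) - 1 \right) \phi(\xi) \, e^{-i\xi x} \, d\xi \right|
\le \frac{A}{\pi} \int_0^\infty \left| \kappa(h\xi) - 1 \right| \xi^{-r} \, d\xi
$$

For the sinc kernel, whose Fourier transform is $\kappa(\xi) = 1$ for $|\xi| \le 1$ and $0$ otherwise, the integral has a closed form:

$$
\frac{A}{\pi} \int_{1/h}^\infty \xi^{-r} \, d\xi = \frac{A \, h^{r-1}}{\pi (r - 1)}
$$

so under these assumptions the bound shrinks at rate $h^{r-1}$ as $h \to 0$. The bound actually computed by rbbnp, following Schennach (2020), may differ in its details.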

Confidence Interval Construction

Standard CI (Ignoring Bias)

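Traditional confidence intervals take the form:

$$
\hat f(x) \pm z_{1-\alpha/2} \, \hat\sigma(x)
$$

where $\hat\sigma(x)$ is the estimated standard error and $z_{1-\alpha/2}$ is the standard normal quantile. These have incorrect coverage when bias is non-negligible.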

Bias-Bound CI

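The bias-bound approach constructs:

$$
\left[ \hat f(x) - \bar b(x) - z_{1-\alpha/2} \, \hat\sigma(x), \;\; \hat f(x) + \bar b(x) + z_{1-\alpha/2} \, \hat\sigma(x) \right]
$$

where $\bar b(x)$ is the bound on the absolute bias, $\hat\sigma(x)$ is the estimated standard error, and $z_{1-\alpha/2}$ is the standard normal quantile. This accounts for the worst-case bias in both directions.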
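The difference between the two intervals is simple arithmetic. The sketch below assumes the bias-bound interval widens the standard one by the bias bound on each side; the helper names and the numbers are hypothetical and are not taken from rbbnp output.

```r
# Pointwise intervals with and without a bias bound (illustrative numbers).
standard_ci <- function(est, se, level = 0.95) {
  z <- qnorm(1 - (1 - level) / 2)
  c(lower = est - z * se, upper = est + z * se)
}

bias_bound_ci <- function(est, se, bias_bound, level = 0.95) {
  # Push each endpoint outward by the bound on the absolute bias
  standard_ci(est, se, level) + c(-bias_bound, bias_bound)
}

est <- 0.80   # hypothetical point estimate
se  <- 0.05   # hypothetical standard error
b   <- 0.03   # hypothetical bias bound

ci_std <- standard_ci(est, se)
ci_bb  <- bias_bound_ci(est, se, b)
rbind(standard = ci_std, bias_bound = ci_bb)
```

Under this assumption the bias-bound interval is wider than the standard one by exactly twice the bias bound, whatever the confidence level.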

Visualization

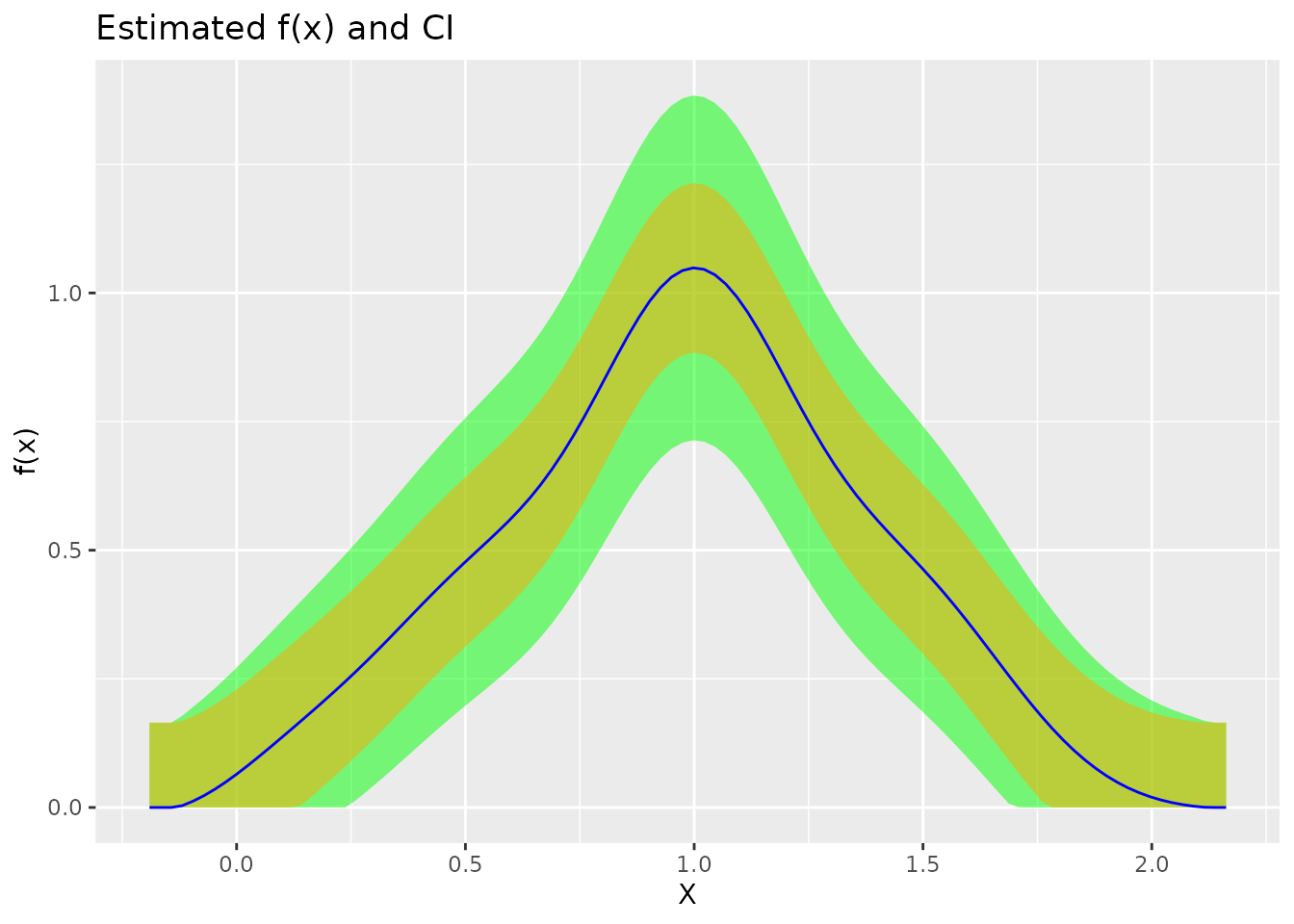

```r
# The plot shows both bands
plot(fit)
```

In the plot:

- Orange band: Bias range

- Green band: Full confidence interval including sampling uncertainty

Kernel Functions

Infinite-Order Kernels

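For the bias-bound approach, infinite-order kernels are recommended because their Fourier transform $\kappa$ satisfies:

$$
\kappa(\xi) = 1 \quad \text{for } |\xi| \le \bar\xi
$$

for some cutoff $\bar\xi > 0$. With bandwidth $h$, this means no bias from frequencies below $\bar\xi / h$, simplifying the bias bound calculation.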
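The role of the flat region can be checked numerically. The sketch below uses two stand-in kernel Fourier transforms, a sharp cutoff and a trapezoid, and evaluates the worst-case bias integral under an assumed envelope $A|\xi|^{-r}$. The trapezoid is a hypothetical stand-in for a smooth transition, not the Schennach2004 formula, and the cutoffs at 1 and 2 are arbitrary. The values of `A`, `r` and `h` are borrowed from the `coef(fit)` output shown earlier purely as plausible inputs.

```r
# Fourier transforms of two flat-top kernels (illustrative forms only)
kappa_sinc <- function(xi) as.numeric(abs(xi) <= 1)        # sharp cutoff at 1
kappa_trap <- function(xi) pmin(1, pmax(0, 2 - abs(xi)))   # 1 on [-1, 1], 0 beyond 2

# Worst-case bias when |phi(xi)| <= A * |xi|^(-r):
#   (1 / pi) * integral over xi > 0 of |kappa(h * xi) - 1| * A * xi^(-r)
bias_bound <- function(kappa, A, r, h) {
  integrand <- function(xi) abs(kappa(h * xi) - 1) * A * xi^(-r)
  # Both kernels equal 1 for |h * xi| <= 1, so the integrand vanishes below 1 / h
  integrate(integrand, lower = 1 / h, upper = Inf, subdivisions = 1000L)$value / pi
}

A <- 3.520305
r <- 1.8375
h <- 0.08

b_sinc <- bias_bound(kappa_sinc, A, r, h)
b_trap <- bias_bound(kappa_trap, A, r, h)
closed_form <- A * h^(r - 1) / (pi * (r - 1))   # sinc case, valid for r > 1

c(sinc = b_sinc, trapezoid = b_trap, sinc_closed_form = closed_form)
```

Because the trapezoid stays closer to 1 than the sharp cutoff at every frequency, its worst-case bound is the smaller of the two. These numbers are not the bounds rbbnp would report.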

Available Kernels

| Kernel | Order | Fourier Transform |
|---|---|---|
| Schennach2004 | $\infty$ | Smooth transition at the cutoff |
| sinc | $\infty$ | Sharp cutoff |
| normal | 2 | Gaussian decay |
| epanechnikov | 2 | Finite support |

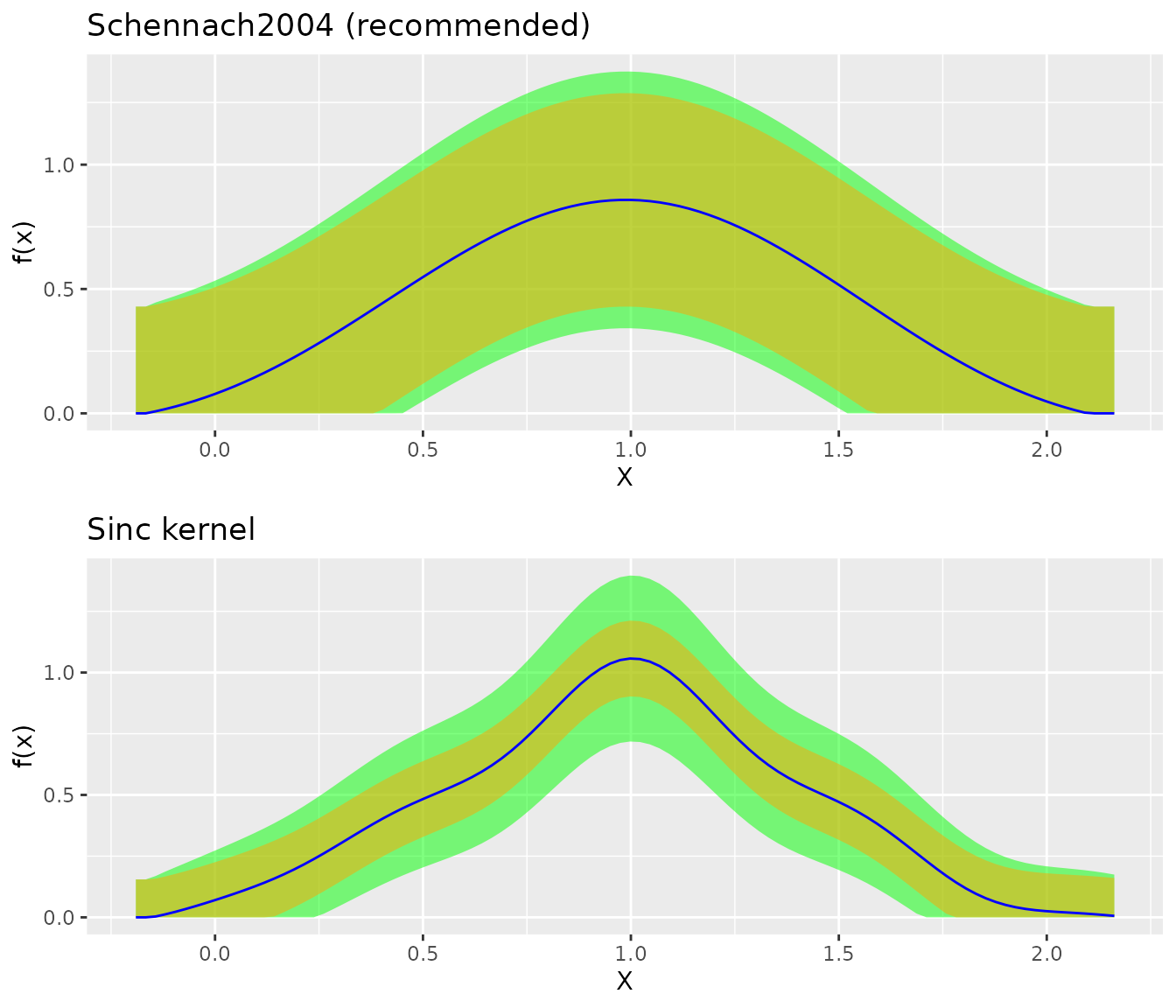

```r
library(ggplot2)    # provides ggtitle()
library(gridExtra)  # provides grid.arrange()

fit_sch <- biasBound_density(X, kernel.fun = "Schennach2004")
fit_sinc <- biasBound_density(X, kernel.fun = "sinc")

# Stack the two plots vertically for comparison
grid.arrange(
  plot(fit_sch) + ggtitle("Schennach2004 (recommended)"),
  plot(fit_sinc) + ggtitle("Sinc kernel"),
  ncol = 1
)
```

Extension to Regression

Conditional Expectation

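For the regression function $m(x) = E[Y \mid X = x]$, the same principles apply. The Nadaraya-Watson estimator:

$$
\hat m(x) = \frac{\sum_{i=1}^{n} K\left( \frac{x - X_i}{h} \right) Y_i}{\sum_{i=1}^{n} K\left( \frac{x - X_i}{h} \right)}
$$

has bias that can be bounded using the Fourier representation of the conditional expectation function.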
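For reference, the Nadaraya-Watson estimator itself takes only a few lines of base R. This sketch uses a Gaussian kernel and simulated data chosen for illustration; it does not compute any bias bound and is independent of `biasBound_condExpectation`.

```r
# Nadaraya-Watson regression with a Gaussian kernel (illustrative sketch).
nw_estimate <- function(x0, x, y, h) {
  sapply(x0, function(pt) {
    w <- dnorm((pt - x) / h)   # kernel weights centred at pt
    sum(w * y) / sum(w)        # locally weighted average of y
  })
}

set.seed(1)
x_sim <- runif(500)
y_sim <- sin(2 * pi * x_sim) + rnorm(500, sd = 0.3)

grid  <- c(0.25, 0.50, 0.75)
m_hat <- nw_estimate(grid, x_sim, y_sim, h = 0.05)
cbind(x = grid, estimate = m_hat, truth = sin(2 * pi * grid))
```

The estimator is a ratio of two kernel sums, which is why the density-estimation ideas carry over to the regression setting.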

Implementation

```r
# Generate regression data
Y <- sin(2 * pi * X) + rnorm(500, sd = 0.3)

# Estimate with bias bounds
fit_reg <- biasBound_condExpectation(Y, X, h = 0.1)

# View smoothness parameters
coef(fit_reg)
#>         A         r         B         h
#> 3.5203051 1.8374996 0.6374611 0.1000000
```

Bandwidth Selection

Cross-Validation

The package uses leave-one-out cross-validation to select the MSE-optimal bandwidth:

h_cv <- select_bandwidth(X, method = "cv", kernel.fun = "Schennach2004")

h_silv <- select_bandwidth(X, method = "silverman", kernel.fun = "normal")

cat("CV bandwidth:", round(h_cv, 4), "\n")

#> CV bandwidth: 0.2508

cat("Silverman bandwidth:", round(h_silv, 4))

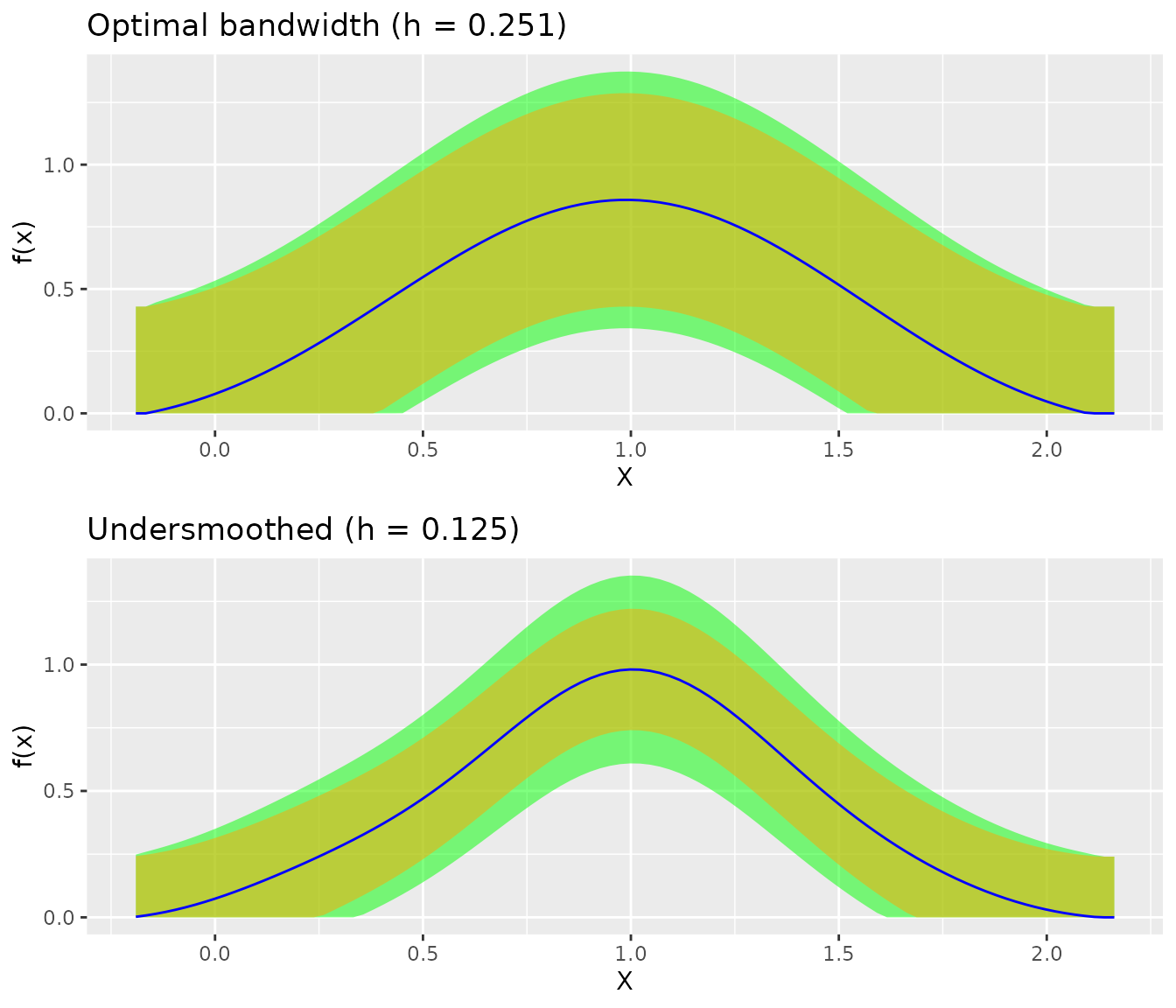

#> Silverman bandwidth: 0.1045Optimal vs. Undersmoothing

Unlike traditional methods, the bias-bound approach uses optimal bandwidths without sacrificing valid inference:

```r
result_opt <- biasBound_density(X, h = h_cv, kernel.fun = "Schennach2004")
result_under <- biasBound_density(X, h = h_cv * 0.5, kernel.fun = "Schennach2004")

grid.arrange(
  plot(result_opt) + ggtitle(paste0("Optimal bandwidth (h = ", round(h_cv, 3), ")")),
  plot(result_under) + ggtitle(paste0("Undersmoothed (h = ", round(h_cv / 2, 3), ")")),
  ncol = 1
)
```

The optimal bandwidth produces narrower confidence intervals while maintaining valid coverage.

Summary

The bias-bound approach provides:

- Valid inference with optimal bandwidths

- Automatic smoothness detection via Fourier analysis

- Explicit bias accounting in confidence intervals

- Efficiency gains over undersmoothing

References

Schennach, S. M. (2020). A Bias Bound Approach to Non-parametric Inference. The Review of Economic Studies, 87(5), 2439-2472. doi:10.1093/restud/rdz065

See Also

- Get Started: Quick introduction

- Density Estimation: Detailed density guide

- Regression: Conditional expectation estimation